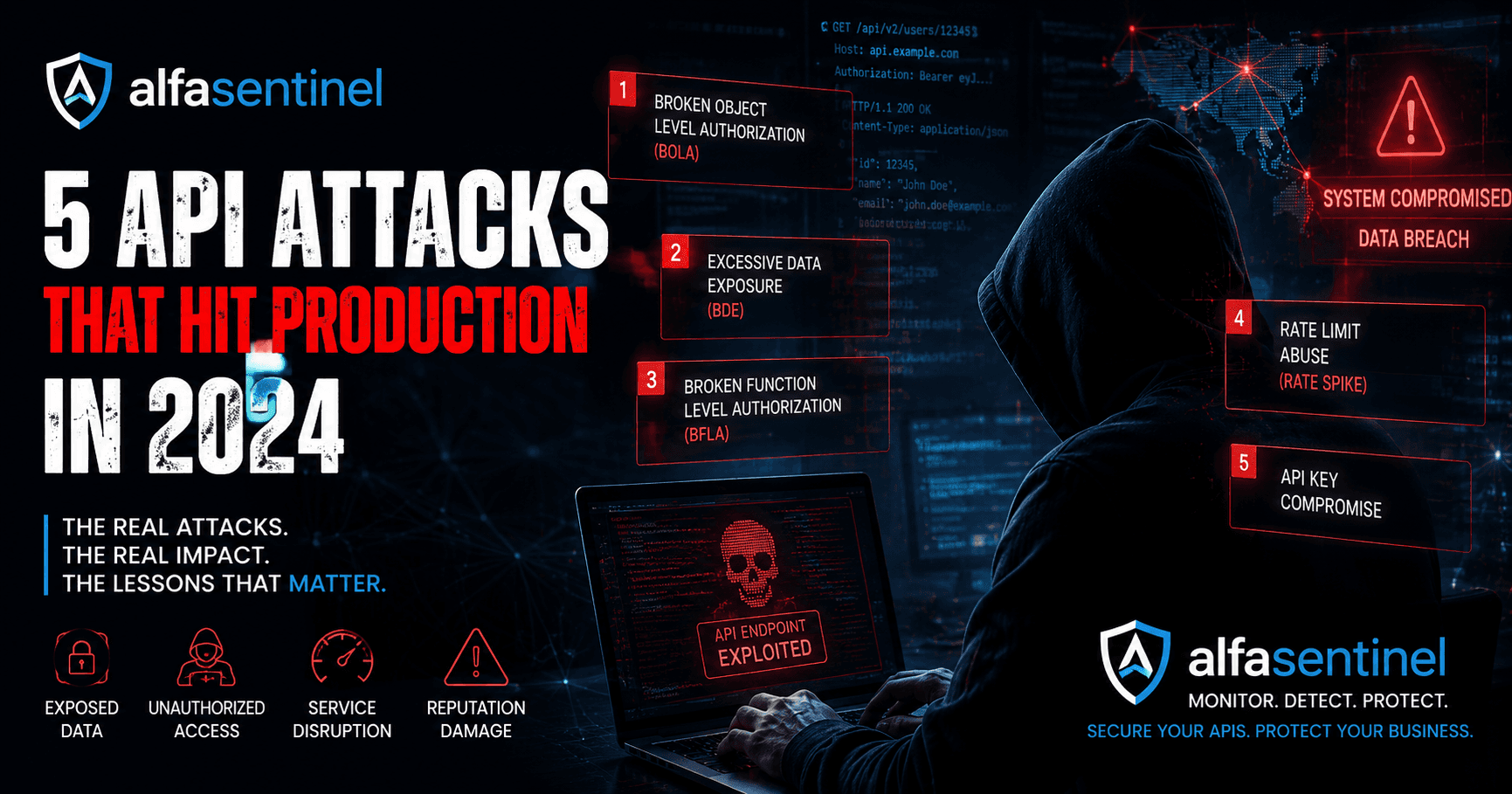

The 5 API Attacks That Hit Production in 2024


Published by: Alfasentinel Security Team

Category: API Security | Reading time: 8 min


API security incidents don't announce themselves. They show up as anomalies in your logs weeks after the fact, in a data breach disclosure you read on HN, or — if you're unlucky — as a phone call from a customer wondering why their account data appeared somewhere it shouldn't.

2024 was a particularly instructive year for API security. Several high-profile incidents followed patterns that security teams should now be treating as baseline threats, not edge cases. This article breaks down the five most impactful API attack patterns from the past year, what made them succeed, and what would have stopped them.

1. Mass account takeover via credential stuffing at the API layer

What happened:

Multiple fintech and e-commerce platforms saw coordinated credential stuffing campaigns targeting their authentication APIs directly — bypassing login UIs entirely. Attackers used lists of breached username/password pairs from unrelated leaks and fired them at /api/v1/auth/login endpoints at scale.

Why it worked:

Traditional rate limiting was applied at the application layer, not the API layer. Attackers distributed requests across thousands of IPs, keeping each one just below the per-IP limit. The API had no awareness of the aggregate pattern.

What the traffic looked like:

A single API endpoint receiving 50,000 requests over 4 hours, distributed across 3,200 unique IPs (about 16 requests per IP, or roughly 4 per IP per hour), with a success rate of approximately 0.3%. That works out to around 150 compromised accounts from a single campaign.

What would have stopped it:

Behavioral baseline monitoring at the API level. Rather than a static rate limit, a system that detects when an endpoint's aggregate failure rate spikes above its historical norm — even across distributed source IPs — catches this pattern in minutes rather than hours.

Detection signal: Sudden spike in 401/403 responses on an auth endpoint, distributed across many IPs, with no corresponding spike in legitimate logins.
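As a rough illustration of that signal, here is a minimal Python sketch of an endpoint-wide failure-rate check. The class name `AuthFailureMonitor`, the thresholds, and the baseline value are all assumptions made for the example, not taken from any of the incidents or from a specific product. It keeps a sliding window of auth responses and alerts when the failure rate is well above the historical norm and the traffic is spread across many source IPs.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AuthFailureMonitor:
    """Tracks the endpoint-wide auth failure rate over a sliding time window."""
    baseline_failure_rate: float      # assumed historical norm, e.g. 0.05
    window_seconds: int = 300
    spike_factor: float = 3.0         # alert when rate > baseline * factor
    min_requests: int = 100           # skip windows too small to judge
    min_distinct_ips: int = 50        # what counts as "distributed" here
    _events: deque = field(default_factory=deque)

    def record(self, timestamp: float, source_ip: str, status: int) -> bool:
        """Record one auth response; return True if the window looks like stuffing."""
        self._events.append((timestamp, source_ip, status))
        # Drop events that have aged out of the window.
        while self._events and self._events[0][0] <= timestamp - self.window_seconds:
            self._events.popleft()

        total = len(self._events)
        if total < self.min_requests:
            return False
        # Recomputed on every call for clarity; a real system would keep counters.
        failures = sum(1 for _, _, s in self._events if s in (401, 403))
        distinct_ips = len({ip for _, ip, _ in self._events})
        failure_rate = failures / total
        return (failure_rate > self.baseline_failure_rate * self.spike_factor
                and distinct_ips >= self.min_distinct_ips)
```

The point of the design is that no per-IP counter appears anywhere: the decision is made on the endpoint's aggregate behavior, so spreading requests across more IPs does not help the attacker.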

2. BOLA exploitation for data harvesting

What happened:

Broken Object Level Authorization (BOLA), listed as API1:2023 in the OWASP API Security Top 10, continued to be the most reliably exploited API vulnerability of the year. In multiple documented incidents, attackers authenticated legitimately and then enumerated object IDs to access records belonging to other users.

Why it worked:

The requests were authenticated. The parameters were valid. The API returned data it was designed to return — just to the wrong person. No injection, no privilege escalation, no malware. Just sequential integers in a URL parameter.

What the traffic looked like:

GET /api/accounts/100441 → 200 OK (attacker's account)

GET /api/accounts/100440 → 200 OK (another user's account)

GET /api/accounts/100439 → 200 OK (another user's account)

GET /api/accounts/100438 → 200 OK (another user's account)

This pattern continued for hours before detection.

What would have stopped it:

BOLA detection requires understanding the relationship between an authenticated session and the objects it accesses. A monitoring system that tracks which object IDs each session has accessed and flags sequential enumeration patterns — especially across ID ranges not associated with that user — catches this before significant data is harvested.

Detection signal: A single authenticated session accessing a high volume of distinct object IDs in numeric sequence, with IDs not historically associated with that user.
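A minimal sketch of that check in Python, under stated assumptions: the `EnumerationDetector` class, the `owned_ids` mapping, and the run-length threshold are hypothetical, and a real system would derive ownership from its own data model. The detector counts how many consecutive requests from one session hit IDs that are adjacent to the previous request and not owned by that user.

```python
from collections import defaultdict


class EnumerationDetector:
    """Flags sessions that walk through object IDs they do not own, in sequence."""

    def __init__(self, owned_ids: dict[str, set[int]], max_foreign_run: int = 3):
        self.owned_ids = owned_ids              # user -> IDs known to belong to them
        self.max_foreign_run = max_foreign_run  # assumed threshold for this sketch
        self._last_id: dict[str, int] = {}
        self._run: dict[str, int] = defaultdict(int)

    def observe(self, session_user: str, object_id: int) -> bool:
        """Return True once the session's run of adjacent foreign IDs is too long."""
        previous = self._last_id.get(session_user)
        self._last_id[session_user] = object_id

        if object_id in self.owned_ids.get(session_user, set()):
            self._run[session_user] = 0
            return False

        # A foreign ID adjacent to the previous request extends the run;
        # otherwise it starts a new run of length one.
        if previous is not None and abs(object_id - previous) == 1:
            self._run[session_user] += 1
        else:
            self._run[session_user] = 1
        return self._run[session_user] >= self.max_foreign_run


# Replaying the traffic shown earlier in this section.
detector = EnumerationDetector(owned_ids={"attacker": {100441}})
results = [detector.observe("attacker", i) for i in (100441, 100440, 100439, 100438)]
```

With these assumed settings the fourth request trips the detector, well before hours of harvesting. Non-sequential IDs (UUIDs, for instance) would need a different signal, such as the count of distinct foreign objects per session.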

3. Shadow API exploitation via forgotten internal endpoints

What happened:

Several API breaches in 2024 involved attackers exploiting endpoints that didn't appear in any official API documentation and weren't covered by standard security tooling. These were legacy endpoints from old API versions, internal debugging routes, and endpoints from third-party integrations — all still live in production.

Why it worked:

Security teams tested and protected their documented API surface. The shadow endpoints — often returning the same data as documented endpoints but with weaker or absent authorization checks — were effectively invisible to security tooling because nobody knew they existed.

What the traffic looked like:

Attackers who had done prior reconnaissance (often using automated API discovery tools) made direct requests to undocumented endpoints, bypassing auth checks that only existed on the documented equivalents.

What would have stopped it:

Monitoring every API request, not just requests to known endpoints. A system that tracks requests to paths with no corresponding traffic in historical baselines surfaces shadow endpoints the moment an attacker probes them — effectively turning attacker reconnaissance into your own discovery mechanism.

Detection signal: Requests to paths with zero prior traffic in baseline, especially if they return non-404 responses.
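One way to approximate this from access logs is sketched below in Python. The function names and the path-normalization rule (collapsing numeric segments into `{id}`) are assumptions for illustration. The baseline here is simply a list of paths seen historically, and the example paths in the usage are made up.

```python
import re


def normalize_path(path: str) -> str:
    """Strip the query string and collapse numeric segments into {id}."""
    return re.sub(r"/\d+(?=/|$)", "/{id}", path.split("?", 1)[0])


def find_shadow_candidates(baseline_paths, recent_requests):
    """Count non-404 requests to normalized paths that are absent from the baseline.

    baseline_paths: iterable of paths observed during the baseline period.
    recent_requests: iterable of (path, status_code) tuples.
    """
    known = {normalize_path(p) for p in baseline_paths}
    candidates = {}
    for path, status in recent_requests:
        normalized = normalize_path(path)
        if normalized not in known and status != 404:
            candidates[normalized] = candidates.get(normalized, 0) + 1
    return candidates


# Hypothetical log sample: only the v2 API is part of the baseline.
baseline = ["/api/v2/accounts/100441", "/api/v2/orders"]
recent = [
    ("/api/v2/accounts/7", 200),            # known route, ignored
    ("/api/v1/accounts/7", 200),            # legacy version still answering
    ("/api/v1/accounts/9?verbose=1", 200),
    ("/internal/debug/dump", 200),          # debugging route
    ("/wp-admin", 404),                     # probe that hit nothing
]
shadow = find_shadow_candidates(baseline, recent)
```

In this sample the two legacy `v1` hits and the debug route are surfaced, while the 404 probe is ignored. Comparing against the API spec instead of historical traffic is an equally reasonable baseline if the spec is kept current.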

4. API scraping via rate limit evasion

What happened:

Data scraping at scale has become increasingly sophisticated. Rather than hammering endpoints at high rates, modern scrapers use distributed infrastructure to stay just below rate limits while extracting large volumes of data over extended periods.

A documented pattern from 2024:

A travel data API was scraped for real-time pricing data by a competitor. The scraper ran from 200+ cloud IP addresses, each making fewer than 100 requests per hour — well below the per-IP rate limit of 500/hour. Over 30 days, approximately 43 million pricing records were extracted.

Why it worked:

Per-IP rate limiting is necessary but insufficient. It doesn't detect distributed scrapers because the threat is invisible at the individual IP level.

What would have stopped it:

Aggregate behavioral analysis. A system that detects when an endpoint's total traffic volume exceeds its historical baseline — regardless of how many IPs are contributing — and correlates behavioral signals like identical user agent strings, consistent request timing, and narrow endpoint focus.

Detection signal: Aggregate request volume spike on a data-returning endpoint, with high uniformity in request structure and timing across many IPs.
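The sketch below shows one possible way to combine two of those signals in Python: total volume against a baseline, and timing regularity per IP. The function names, the thresholds, and the use of the coefficient of variation as a uniformity measure are assumptions chosen for the example, not a description of how any particular incident was detected.

```python
from statistics import mean, pstdev


def timing_uniformity(timestamps):
    """Coefficient of variation of inter-request gaps; near 0 means clockwork timing."""
    gaps = [b - a for a, b in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2 or mean(gaps) == 0:
        return float("inf")  # not enough data to call it uniform
    return pstdev(gaps) / mean(gaps)


def looks_like_distributed_scraper(requests_by_ip, baseline_hourly_counts,
                                   volume_factor=2.0, max_cv=0.1,
                                   min_uniform_share=0.8):
    """Check one hour of traffic for a single endpoint.

    requests_by_ip: dict mapping source IP -> list of request timestamps (seconds).
    baseline_hourly_counts: historical hourly request totals for the endpoint.
    """
    total = sum(len(ts) for ts in requests_by_ip.values())
    # Step 1: is the endpoint's aggregate volume above its own history?
    if total < volume_factor * mean(baseline_hourly_counts):
        return False
    # Step 2: do most contributing IPs show machine-like regular timing?
    uniform = sum(1 for ts in requests_by_ip.values()
                  if timing_uniformity(sorted(ts)) <= max_cv)
    return uniform / len(requests_by_ip) >= min_uniform_share
```

Note that each IP in the travel API example would pass a 500/hour per-IP limit untouched; it is only the endpoint total and the uniformity across IPs that stand out. User-agent similarity and endpoint focus could be added as further inputs in the same way.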

5. Business logic abuse via API parameter manipulation

What happened:

API business logic attacks don't exploit code vulnerabilities — they exploit gaps between what the API is designed to do and what it actually prevents. In 2024, several e-commerce and financial APIs were abused through parameter manipulation: negative quantities in order APIs, unusual decimal values in currency fields, sequential transaction ID guessing.

A specific incident type:

A promotions API that accepted coupon codes didn't validate that a coupon could only be applied once per user at the API level (validation existed in the UI but not the API). Attackers scripted bulk coupon redemption, extracting significant monetary value before the issue was detected.

Why it worked:

Business logic validation lived in the frontend, not the API. The API itself accepted any valid-format request.
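To make the gap concrete, here is a minimal Python sketch of the once-per-user rule enforced on the server side. `RedemptionService` and its in-memory set are hypothetical stand-ins; in a real service the same rule would more likely be a uniqueness constraint in the database so it also holds under concurrent requests.

```python
class RedemptionService:
    """Enforces 'one redemption per user per coupon' inside the API itself."""

    def __init__(self):
        # (user_id, coupon_code) pairs already redeemed; stand-in for a DB table.
        self._redeemed = set()

    def redeem(self, user_id: str, coupon_code: str) -> bool:
        """Return True if the coupon was applied, False if this user already used it."""
        key = (user_id, coupon_code)
        if key in self._redeemed:
            return False  # reject here, regardless of what the UI allowed
        self._redeemed.add(key)
        return True


service = RedemptionService()
first = service.redeem("user-1", "SAVE10")    # applied
replay = service.redeem("user-1", "SAVE10")   # scripted replay is rejected
other = service.redeem("user-2", "SAVE10")    # a different user is unaffected
```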

What would have stopped it:

Anomaly detection on request parameter patterns and endpoint usage. A user making 300 requests to a promotions endpoint in an hour — each with a different coupon code — is a behavioral pattern that stands out sharply from normal usage.

Detection signal: High request volume to a specific business-function endpoint from a single authenticated user, especially with high parameter variation.
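A simple version of that signal can be sketched in Python as follows. The class name, the one-hour window, and the limit of 20 distinct values are assumptions for illustration; a sensible limit would come from what typical users of the endpoint actually do.

```python
from collections import defaultdict


class ParameterVariationMonitor:
    """Flags a user who sends many distinct parameter values to one endpoint."""

    def __init__(self, max_distinct_values: int = 20, window_seconds: int = 3600):
        self.max_distinct_values = max_distinct_values
        self.window_seconds = window_seconds
        # (user_id, endpoint) -> list of (timestamp, parameter value)
        self._seen = defaultdict(list)

    def observe(self, timestamp: float, user_id: str, endpoint: str, value: str) -> bool:
        """Record one request; return True if distinct values in the window exceed the limit."""
        key = (user_id, endpoint)
        cutoff = timestamp - self.window_seconds
        events = [(t, v) for t, v in self._seen[key] if t > cutoff]
        events.append((timestamp, value))
        self._seen[key] = events
        return len({v for _, v in events}) > self.max_distinct_values


# The pattern described above: 300 requests in an hour, each with a new code.
monitor = ParameterVariationMonitor()
alerts = [
    monitor.observe(i * 12.0, "user-1", "/api/promotions/redeem", f"CODE{i}")
    for i in range(300)
]
first_alert = alerts.index(True)
```

With these assumed settings the alert fires on the 21st distinct code, about four minutes into the run. A user retrying the same code several times never trips it, because only distinct values are counted.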

The common thread

Five different attack types. One common failure: none of them would have been visible to a monitoring system watching only for traditional threat signatures.

Credential stuffing that stays below per-IP rate limits. BOLA attacks using valid credentials. Shadow endpoints that don't appear in documentation. Scrapers distributed across hundreds of IPs. Business logic abuse using valid parameters.

What these attacks have in common is that they're only visible as behavioral anomalies — deviations from established baseline patterns that no individual request makes obvious.

The shift in API security posture that 2024 demanded is from signature-based detection to baseline behavioral monitoring. The question isn't "does this request look malicious?" — it's "does this pattern of requests deviate from what we've established as normal?"

What to check in your own APIs

Before your next sprint, it's worth a quick audit:

Auth endpoint failure rates — are you seeing elevated 401/403 rates on any endpoint over the past 7 days?

Object ID access patterns — do any authenticated sessions access unusually wide ranges of object IDs?

Undocumented endpoints — does your API monitoring cover requests to paths that aren't in your API spec?

Aggregate rate patterns — are you monitoring total endpoint traffic volume, not just per-IP rates?

Business logic endpoints — do any high-value endpoints show usage patterns dramatically different from the median user?

These five checks won't catch everything. But they'll catch the five attack types that caused the most damage in 2024.

ApiSentinel monitors these behavioral patterns in real time across your API surface using four detectors: RateSpikeDetector, BOLADetector, ShadowAPIDetector, and BruteForceDetector. The free tier covers up to 10,000 API calls/month. Get started →